When CodeRabbit Catches What Agents Miss

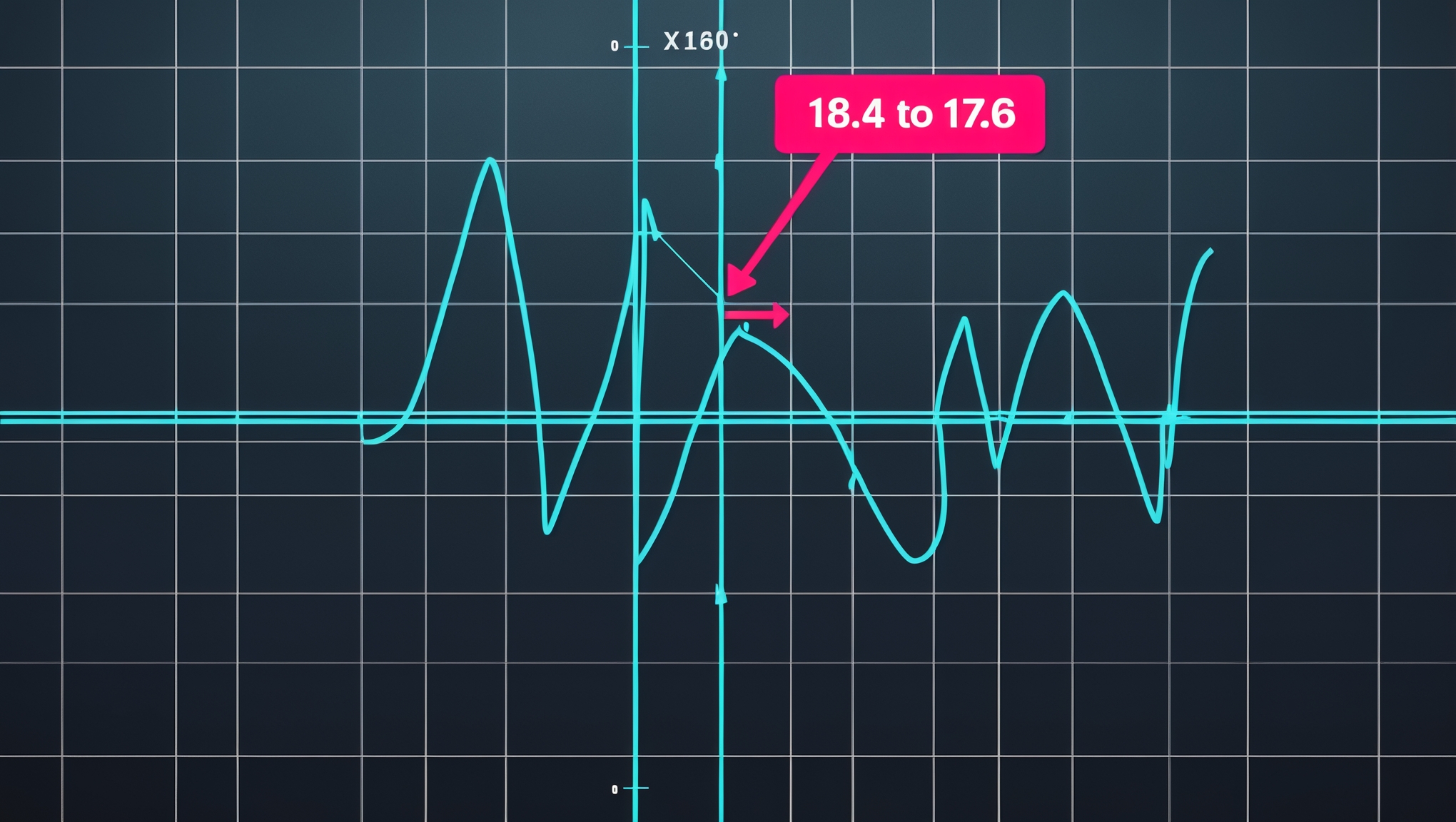

A piecewise scoring function had a hidden discontinuity at the boundary: score of 18.4 on one side, 17.6 on the other. No test caught it. Three agents reviewed the code and missed it. CodeRabbit flagged it in a review comment. This is why reviewers still matter in the agent era.

The calibrator rewrite looked clean. Three agents had reviewed the diff. The unit tests passed. CI was green. The math reviewer agent had walked through the rubric and signed off. The PR was one click from merge when CodeRabbit left a comment that stopped the session cold.

The comment was about a single line. It pointed at a boundary condition in a piecewise scoring branch and asked, in the polite CodeRabbit voice, whether the score on either side of that boundary was actually continuous. I ran the numbers. At the boundary (the exact input value where the branch switched) the score jumped by 0.8 points: 17.6 on one side, 18.4 on the other. A 0.8-point discontinuity at a point where the function was supposed to rise smoothly. Nobody had seen it. No test exercised the exact boundary value. Every agent in the review chain had looked past it.

This post is about why agents miss math bugs at branch boundaries, why static reviewers catch them, and why the right answer in the agent era is not "use more agents" but "keep the reviewers and take their comments seriously."

The Specific Bug

The calibrator was a piecewise linear function of a probability ratio input. Think of it as three segments glued together, with different slopes on each segment. The code looked roughly like this:

    def calibrate(ratio: float) -> float:
        if ratio < 0.15:
            return ratio * 120.0  # segment A: steep
        elif ratio < 0.40:
            return 18.0 + (ratio - 0.15) * 40.0  # segment B: medium
        else:
            return 28.0 + (ratio - 0.40) * 12.0  # segment C: shallow

At ratio = 0.15, segment A evaluates to 0.15 * 120 = 18.0. Segment B evaluates to 18.0 + 0 * 40 = 18.0. Those look continuous. But the real function had been simplified from a longer form that included a nonlinear correction term, and during simplification the constant on segment B had drifted. The actual code used 18.4 as the segment-B constant and 17.6 / 0.15 as the segment-A slope. At ratio = 0.15, segment A evaluated to 17.6 and segment B evaluated to 18.4: a jump of 0.8 on a rubric whose maximum contribution was 25.

In practical terms: two signals with ratios of 0.14999 and 0.15001, which should score almost identically, scored roughly 17.6 and 18.4. The function was not continuous across the boundary. Inputs arbitrarily close to each other produced scores 0.8 apart in a 25-point component. That is not a rounding error. That is a real bug in the shape of the function.
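To make the gap concrete, here is a minimal sketch that evaluates both segments at the shared boundary, using the constants described above. The names `segment_a` and `segment_b` are mine for illustration, not from the real codebase, and the segment-B slope of 40.0 is carried over from the simplified listing as an assumption.

```python
BOUNDARY = 0.15  # breakpoint between segment A and segment B


def segment_a(ratio: float) -> float:
    # Slope as described in the post: 17.6 / 0.15
    return ratio * (17.6 / 0.15)


def segment_b(ratio: float) -> float:
    # Constant as described in the post: 18.4 (the drifted value).
    # The slope of 40.0 is assumed from the simplified listing.
    return 18.4 + (ratio - BOUNDARY) * 40.0


# Evaluate both expressions at the exact same input and compare.
left = segment_a(BOUNDARY)
right = segment_b(BOUNDARY)
gap = abs(right - left)

print(f"segment A at {BOUNDARY}: {left:.4f}")
print(f"segment B at {BOUNDARY}: {right:.4f}")
print(f"gap: {gap:.4f}")
```

Run as written, this should report a gap of 0.8000. Nothing here depends on running the full calibrator, which is the point: the check is two evaluations and a subtraction.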

Why The Agents Missed It

Three separate agents reviewed that file. Two of them were general code reviewers. One was a math reviewer specifically tasked with verifying the scoring rubric. None of them flagged the discontinuity. When I went back and audited the review trace, the pattern was consistent across all three.

Agents reading a piecewise function tend to verify the branches individually. They check that each branch does what its comment says it does. They check that the arithmetic within each branch is correct. What they do not reliably do is evaluate the function at the exact boundary input and compare the outputs on either side. The boundary case is a property that exists between branches, not within one. Agents trained on line-by-line review are very good at line-by-line bugs. They are substantially worse at the bug that lives in the seam.

The unit tests had the same blind spot. The test suite had cases for ratio = 0.10 (segment A), ratio = 0.25 (segment B), and ratio = 0.60 (segment C). It had no case for ratio = 0.15 exactly, or ratio = 0.14999, or ratio = 0.15001. The branches were tested. The seams were not. This is a remarkably common test-design failure and it is why piecewise functions eat scoring systems for lunch.
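One way to close that gap is to generate probe inputs around every breakpoint and add them to the interior cases. The sketch below is illustrative only: `seam_probes` and its `step` parameter are hypothetical names, and the interior cases are simply the three ratios the suite already covered.

```python
from typing import Iterable, List


def seam_probes(boundaries: Iterable[float], step: float = 1e-5) -> List[float]:
    """Return inputs just below, exactly at, and just above each breakpoint."""
    probes: List[float] = []
    for b in boundaries:
        probes.extend([b - step, b, b + step])
    return probes


# Interior cases the suite already had, one per segment.
interior_cases = [0.10, 0.25, 0.60]

# Seam cases the suite was missing: three probes per breakpoint.
seam_cases = seam_probes([0.15, 0.40])

all_cases = sorted(interior_cases + seam_cases)
print(all_cases)
```

Feeding `all_cases` into the existing assertions would exercise both the branches and the seams between them.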

Why CodeRabbit Caught It

CodeRabbit's comment did not come from running the code. It came from reading the structure of the function and asking a question that agents rarely ask: does this function preserve continuity at the branch boundary? That question is the right one for any piecewise numeric function, and it is the question that makes discontinuity bugs fall out immediately. Once you are asking "does segment A equal segment B at the boundary value?" the arithmetic is trivial. You evaluate both expressions at the boundary and compare. If they differ, you have found the bug.
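The same question can be asked mechanically. The following is a rough sketch, assuming each segment can be written as its own callable; `boundary_gaps` is a hypothetical helper, and the segments use the simplified constants from the listing earlier in the post rather than the real code.

```python
from typing import Callable, List, Tuple

Segment = Callable[[float], float]


def boundary_gaps(
    breakpoints: List[float], segments: List[Segment]
) -> List[Tuple[float, float]]:
    """For each breakpoint, return (breakpoint, right_value - left_value).

    segments[i] is the expression used to the left of breakpoints[i],
    segments[i + 1] the expression used to the right of it.
    """
    if len(segments) != len(breakpoints) + 1:
        raise ValueError("need exactly one more segment than breakpoints")
    gaps = []
    for i, b in enumerate(breakpoints):
        left = segments[i](b)       # left-hand expression at the boundary
        right = segments[i + 1](b)  # right-hand expression at the boundary
        gaps.append((b, right - left))
    return gaps


# Simplified segments A, B, C from the listing above.
segments = [
    lambda r: r * 120.0,
    lambda r: 18.0 + (r - 0.15) * 40.0,
    lambda r: 28.0 + (r - 0.40) * 12.0,
]

gaps = boundary_gaps([0.15, 0.40], segments)
for b, gap in gaps:
    status = "ok" if abs(gap) < 1e-9 else "DISCONTINUOUS"
    print(f"boundary {b}: gap {gap:+.6f} ({status})")
```

With the simplified constants both boundaries come out continuous up to floating-point noise. Swapping in a drifted constant on any segment makes the corresponding gap show up immediately.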

This is not a question that gets asked by accident. It is a question that gets asked because the reviewer has an explicit mental model of the failure mode and is scanning the code for places where the failure mode could hide. CodeRabbit is tuned for exactly those kinds of structural questions on math-heavy code. Agents focused on implementation correctness are not. Both kinds of review matter, and they catch different categories of bugs. Removing either one leaves a hole.

The Test That Should Have Existed

The fix for the bug was a one-line correction to segment B's constant. The fix for the process was more important: every piecewise function in the scoring engine now has an explicit continuity test. The test is trivial to write. It looks like this:

    def test_calibrate_continuity_at_boundaries():
        boundaries = [0.15, 0.40]
        epsilon = 1e-9
        for b in boundaries:
            left = calibrate(b - epsilon)
            right = calibrate(b + epsilon)
            assert abs(left - right) < 1e-6, (
                f"Discontinuity at ratio={b}: "
                f"left={left}, right={right}, delta={abs(left - right)}"
            )

Four lines of test logic. A loop over every boundary value in the function. Evaluation just below and just above each boundary. Assertion that the outputs agree to within numerical precision. This test would have caught the 0.8-point discontinuity on the first run. It would have failed the PR. It would have prevented the bug from ever reaching CodeRabbit, because the test would have reached it first.

The discipline here generalizes. For any piecewise numeric function (in a scoring engine, a classifier, a price model, a signal rubric in the InDecision Framework), write a continuity test at every branch boundary. The test takes three minutes to write. It catches a category of bug that unit tests normally miss. And unlike most tests, it rarely needs rewriting when the function changes: as long as the boundary list is kept in sync with the function's breakpoints, the assertion is a universal property, not a hand-coded expected value.
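One way to keep the test and the function from drifting apart is to have both read the breakpoints from a single table. This is a sketch under my own assumptions, not how the real calibrator is laid out: `BREAKPOINTS`, `SEGMENTS`, and `continuity_gaps` are hypothetical names, and the constants are the simplified ones from the listing earlier in the post.

```python
from bisect import bisect_right
from typing import Callable, Dict, Sequence

# Single source of truth for the function's structure (hypothetical layout).
BREAKPOINTS = (0.15, 0.40)
# One (value_at_segment_start, slope) pair per segment: A, B, C.
SEGMENTS = (
    (0.0, 120.0),
    (18.0, 40.0),
    (28.0, 12.0),
)


def calibrate(ratio: float) -> float:
    # bisect_right sends ratio == 0.15 to segment B, matching `ratio < 0.15` for A.
    idx = bisect_right(BREAKPOINTS, ratio)
    start = 0.0 if idx == 0 else BREAKPOINTS[idx - 1]
    base, slope = SEGMENTS[idx]
    return base + (ratio - start) * slope


def continuity_gaps(
    fn: Callable[[float], float],
    breakpoints: Sequence[float],
    epsilon: float = 1e-9,
) -> Dict[float, float]:
    """Absolute difference between fn just above and just below each breakpoint."""
    return {b: abs(fn(b + epsilon) - fn(b - epsilon)) for b in breakpoints}


# The test reads the same BREAKPOINTS the function uses, so adding a
# segment cannot leave a boundary untested.
gaps = continuity_gaps(calibrate, BREAKPOINTS)
assert all(gap < 1e-6 for gap in gaps.values()), gaps
print(gaps)
```

The trade-off is that the function becomes table-driven rather than a readable if/elif chain. For a three-segment calibrator that may or may not be worth it, but it does make the continuity check independent of any hand-maintained list.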

The Broader Point

The lesson I keep coming back to from this session is not "CodeRabbit is better than agents" or "agents are unreliable." Both of those framings miss the point. The lesson is that review layers catch different bugs, and stripping out the review layer you think is redundant is the fastest way to ship the bug you were not looking for.

Agents are excellent at implementation correctness. They are worse at structural properties that live between lines of code. Static reviewers like CodeRabbit are excellent at structural properties and less reliable at nuanced implementation semantics. Human reviewers catch the bugs that neither category can see because they hold product intent in their heads. You need all three. The cost of running all three is far lower than the cost of a discontinuity bug in a load-bearing scoring function that silently produces the wrong rankings for weeks.

The agent era has made it tempting to strip review layers on the theory that the agent's own correctness check is sufficient. It is not sufficient. It is powerful, it is fast, and it catches most bugs — but the bugs it misses are the structural ones that hide in seams. Those bugs need a different kind of reviewer. Keep CodeRabbit in the loop. Read its comments carefully, especially the ones that sound like questions. The next time CodeRabbit asks whether a boundary is continuous, the answer matters more than the friction of checking it.

Our calibrator ships with a continuity test now. It took three minutes to write. It would have saved a CodeRabbit round trip, a branch revision, and a near-miss on a bug that had already passed three agent reviews. The agents are not going away. Neither are the reviewers. Both of them catch different things, and both of them are required if the thing you are shipping is a scoring function that real capital will follow.
